Coding is a thought-consuming task that keeps the brain's utilization very high, which leaves little room for anything else, including another coding task. So, if a programmer gets another task in the middle of a coding task, he will most likely get overwhelmed.

I think this is where the perception that programming is hard comes from, or at least it is one of the reasons. There are just so many things that have to be held in the brain's RAM. There are lots of abstractions and associations to keep in mind at the same time. However, knowing this, we can make it less hard by not putting too much in our brain at once.

Let's illustrate a little. Let's say the thought capacity of our brain is 100. If a thought-consuming task takes 80, then we only have 20 left, so there's no way another 80-point task could fit in there, or anything above 20 for that matter. To keep things flowing, just keep the brain from being too occupied. Keep what's in it to a manageable (and fun) amount. In short, keep it sustainable.

We then need to put the surplus aside first, and this is why programmers need a system and the discipline to do this effectively. I don't think we can do a medium to large programming task without some kind of checklist to keep the subtasks in order and catch the emerging tasks along the way. We need to allow only stuff related to the current "theme" in our head and immediately push everything else out to another system, before our brain starts the chain reaction of spawning the emerging thought (or distraction) into something that disrupts our flow of thinking. We can revisit this buffer afterwards, prioritize and pick the next task.

The system could be pencil and paper, a text editor or, in my case, a mind mapping application. It doesn't matter as long as you can depend on it as a thought-catcher, i.e. it is simple, allows instant entry and keeps your mind clear on one single task.

There's one "sacred" principle here: there should always be a task. If the task, when you work on it, proves to be insignificant or false, or you find that it should be something else, do something about it first before doing anything else. Cross it out, rename it, whatever, as long as you don't just unconsciously forget it. One forgotten task could lead your mind into a wandering state, and after some unproductive time you end up with "mmhh, what was I trying to do just now?". Don't let our mind do the thinking just for the fun of it. We are not maintaining our mind, giving it good food and rest, just so it can have fun by itself, are we :).

I find that being really strict about doing just one relatively small, very concrete, well-defined thing at a time helps in getting more programming tasks done (meaning more features added, bugs fixed, etc., as opposed to more mess :) ).

Wednesday, November 26, 2008

The Need to "Catch" Emerging Task While Coding

Posted by Hafiz on Wednesday, November 26, 2008. 0 comments. Label: coding

Sunday, August 17, 2008

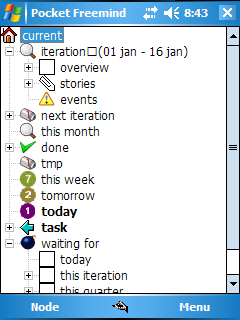

Pocket Freemind, Continuing After 0.5 Release

Below are my updates on the Pocket Freemind project since the last 0.5 release. I just coded away for a while, wanting to see how some ideas would look in concrete form. Now it seems appropriate to take a break and write some notes and updates about it.

- The extraction of libPocketFreemind and a new, more modular GUI. I've extracted the object model and started a new GUI on top of it to allow more flexibility in enhancing the user interface. The original GUI is still intact, sharing the same libPocketFreemind (which was extracted from it relatively painlessly).

- The soft-key menu is back, complemented with a custom toolbar. The standard .NET CF toolbar makes the standard soft-keys unusable, so I made a custom toolbar that does not interfere with them. I use a picture box, panel, imagelist and a little hack. Hopefully users will still feel it is a toolbar :).

- Individual color picking instead of a predefined set of styles. There is now a color picker for a node's font and background color. Thanks to TamPPC for the code. Node styles will be revisited later with an editable style list.

- Dialogless note window. It uses a splitter to share the screen with the map's view. It now looks more like Freemind on the desktop. I think this way the note can be more useful and more comfortable to enter.

- Icon dialog updated. It now has an (emulated) upper-right close window button.

- More aligned with the desktop version. The menus, terms and behavior use the desktop version for reference as long as it still makes sense. The special case of the Pocket PC should be considered too, of course.

The new note view with a resizable splitter. It stays in sync as the nodes are navigated. There is a node with individual color and background too.

The above is the new look of the icon dialog.

And here is the new color picker. The base code is in VB. I wrapped the code more or less as it is in a user control (adding an event handler), put it in a dialog, added a preview, and used it in the C# project (the benefit of the multi-language nature of .NET :) ).

Thankfully, it came at the right time; there used to be only commercial color picker components around.

Posted by Hafiz on Sunday, August 17, 2008. 0 comments. Label: coding

Wednesday, July 16, 2008

Using JEdit for AutoIt Development

I worked with AutoIt today and, as a JEdit user, I felt reluctant to install another text editor just for it. So, I searched around for a JEdit-based solution, which got me this link. It is actually a frame from this site; I link to it directly here since it's not that obvious if you go from the main page.

It took time to follow all the instructions, even to the point where I doubted whether it was all worth it :). Hopefully it will be integrated with JEdit's built-in plugin manager later for seamless installation. However, it works nicely in the end and my JEdit setup is now very comfortable to work with for the task.

Posted by Hafiz on Wednesday, July 16, 2008. 0 comments. Label: application

Using Custom Action in Visual Studio 2005 Deployment Project (under Vista)

There are some quirks in using Custom Actions in Visual Studio 2005, and if your development machine runs Vista you have even more trouble to sort out. Here is what I faced recently:

- Unhelpful error messages. See: "Windows Installer fails on Vista with 2869 error code.". In my case, I just ran the resulting installer on an XP machine to get a more informative message.

- When you add a Custom Action for the Commit phase, you need to include your Custom Action library in the Install phase as well, even though you don't do anything in it, e.g. override it. If you don't do this, you will get an error about not being able to save state or something similar. More about it here.

Posted by Hafiz on Wednesday, July 16, 2008. 0 comments. Label: coding

Thursday, July 10, 2008

Using Directshow in .Net : DirectshowNet vs. C++/CLI

There are two usable options for using Directshow in .Net so far (excluding the abandoned managed Directshow):

- Purely in .Net using DirectshowNet : code -> directshownet

- Get a little bit dirty using C++/CLI : code -> c++/cli bridge -> directshow

The C++/CLI option has several advantages:

- You don't need to translate between what's in the Directshow manual and what you need to write. In many cases you can just paste much of the boilerplate code as is and it just runs. Using DirectshowNet you have to do some mental gymnastics to do it.

- Debugging is more comfortable in the native language.

- You can hide the complexities of Directshow/COM exclusively in native code and keep your managed code clean. You'll most likely end up with a three-layer modularisation: .net code -> c++/cli -> native c++ that accesses directshow. In the middle layer (c++/cli) you can provide your managed code with clean interfaces using native .net constructs, e.g. properties and event handlers.

To sum up: you'll do better using C++/CLI when:

- You are already familiar with C++

- You want to make an application rapidly utilizing .Net

The best option of all would be a .Net library that encapsulates Directshow in a more .Net-friendly way, not just a collection of "typedef"s. An even better one would be a much friendlier multimedia framework (assuming directshow's existing codecs and filters are ported there too :) ).

Posted by Hafiz on Thursday, July 10, 2008. 6 comments. Label: coding

Tuesday, July 08, 2008

Summarizing (Heavily) the Use of Event Handling Framework in .Net

I have been writing lots of code using callbacks recently, and it happens to be in .Net (C#). I needed some time to get used to the terms, conventions and helpers that are available in .Net, but in the end it is actually quite convenient, with lots of predefined constructs available.

Here's a little summary:

- For "raw" callbacks there's the delegate. It's only a little higher-level than C's function pointer, i.e. it provides type checking.

- Above it there's the event construct. It restricts the delegate so that a client can only add and remove its own handlers and cannot touch those of others. With that restriction it can be viewed as an implementation of the broadcaster-subscriber model (or signal-slot in Qt terms).

- Now it gets more interesting: there is a predefined event handling framework that can be used, so I can save some typing and maintenance. For typical purposes, you can use the default non-generic version. What you need to do is declare the event on the broadcaster:

public event EventHandler SomethingHappen;

and on the client, make the handling function based on the predefined argument pattern,

private void OnPlayFinished(Object sender, EventArgs e) { ... do work... }

and subscribe it somewhere :

broadcasterObject.SomethingHappen += OnPlayFinished;

This is what is used throughout the Windows Forms-related code in Visual Studio, and we can use it for our own purposes too.
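
To see the three pieces working together, here is a minimal, self-contained sketch. The Broadcaster and Client classes, the Finish method and the RawCallback field are hypothetical names made up for this illustration; only SomethingHappen and OnPlayFinished come from the snippets above. It assumes a plain console program rather than a Windows Forms one.

```csharp
using System;

// Hypothetical broadcaster for this sketch; only the event name comes from the post.
public class Broadcaster
{
    // "Raw" callback: a public delegate field that any client can invoke or overwrite.
    public Action<string> RawCallback;

    // Event: outside this class, clients can only use += and -= on it.
    public event EventHandler SomethingHappen;

    public void Finish()
    {
        if (RawCallback != null) RawCallback("finished");

        // Copy to a local first so the null check and the call see the same delegate.
        EventHandler handler = SomethingHappen;
        if (handler != null) handler(this, EventArgs.Empty);
    }
}

// Hypothetical client that counts how often it was notified.
public class Client
{
    public int Notifications;

    public void OnPlayFinished(object sender, EventArgs e)
    {
        Notifications++;
    }
}

public static class Program
{
    public static void Main()
    {
        Broadcaster broadcasterObject = new Broadcaster();
        Client client = new Client();

        broadcasterObject.RawCallback = message => Console.WriteLine("raw callback: " + message);
        broadcasterObject.SomethingHappen += client.OnPlayFinished;
        broadcasterObject.Finish();   // the client is notified

        broadcasterObject.SomethingHappen -= client.OnPlayFinished;
        broadcasterObject.Finish();   // nobody is subscribed any more

        Console.WriteLine("notifications: " + client.Notifications);
    }
}
```

The client is notified once, because it unsubscribes before the second Finish call. Trying to assign to or invoke broadcasterObject.SomethingHappen from Main would not compile, which is exactly the restriction the event keyword adds on top of a plain delegate field such as RawCallback.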

Posted by Hafiz on Tuesday, July 08, 2008. 0 comments. Label: coding

Wednesday, July 02, 2008

Get Current Position On Encoding in Directshow

When encoding in Directshow, getting the current position (needed when you want to show the progress of the current encoding process) is not as obvious as for playing. I first tried the code from the playing operation, i.e. get the IMediaSeeking interface and call GetDuration and GetCurrentPosition, but the progress was very inaccurate. GraphEdit behaves inaccurately as well.

I found the solution later after some browsing around in the Directshow help. To summarize the solution/explanation:

The IMediaSeeking you get from the graph is only accurate for playing operations. For file-related operations, e.g. encoding, get IMediaSeeking from the AVIMux instead of the graph. The rest is the same as for playing.
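
Once the duration and current position are read from the right object, turning them into a progress value is simple arithmetic. The sketch below shows only that step. IPositionSource and FakeMux are hypothetical stand-ins invented for this example so that it runs without Directshow; in real code the two values would come from the seeking interface obtained from the AVIMux, as described above.

```csharp
using System;

// Hypothetical stand-in for the two calls used in the post (not a Directshow type).
public interface IPositionSource
{
    long GetDuration();
    long GetCurrentPosition();
}

// Hypothetical fake that returns fixed values, standing in for the AVIMux.
public class FakeMux : IPositionSource
{
    private readonly long duration;
    private readonly long position;

    public FakeMux(long duration, long position)
    {
        this.duration = duration;
        this.position = position;
    }

    public long GetDuration() { return duration; }
    public long GetCurrentPosition() { return position; }
}

public static class EncodingProgress
{
    // Returns progress as a whole percentage clamped to 0..100.
    public static int Percent(IPositionSource source)
    {
        long duration = source.GetDuration();
        if (duration <= 0) return 0;   // unknown duration: report no progress

        long percent = source.GetCurrentPosition() * 100 / duration;
        if (percent < 0) percent = 0;
        if (percent > 100) percent = 100;
        return (int)percent;
    }
}

public static class Program
{
    public static void Main()
    {
        IPositionSource mux = new FakeMux(200, 50);
        Console.WriteLine(EncodingProgress.Percent(mux) + "%");
    }
}
```

The clamping is a defensive choice for a progress bar, since a position slightly past the reported duration should not push the bar beyond its maximum.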

Posted by Hafiz on Wednesday, July 02, 2008. 1 comment. Label: coding

Sunday, June 08, 2008

Children and Human's Low Level API

One of the privileges you get from having children (I have two myself) is the chance to see the layers of the human behavior API. It is exposed incrementally, so you get the chance to differentiate between what is inherent to every human and what is character. If you have more than one, you have an even greater chance to compare things and search for invariants.

I see for myself how certain characteristics are already within a person without us doing anything special to develop them. Being very attentive or very extroverted shows in babies even when we don't condition them to be (I always thought this was very conditioned). Of course, later you can teach them and manage some of their traits to be more effective, but inherently they already have certain tendencies.

The most interesting thing for me is to see how we learn. It's really incredible to see how children learn and to reflect on how we ourselves learn. You will see where those principles in AI books come from. I think you could actually subtitle any AI textbook "how babies learn" :).

On the physical level it is quite the same too. You see how the hair and teeth grow and how the faces change and shape up. Watching them learn how to walk is fun and refreshing, and you see how those muscles strengthen.

It's probably a little inhuman to call it an API; you could say instead: if you'd like to learn and know more about your own inner workings, have children.

Posted by Hafiz on Sunday, June 08, 2008. 0 comments. Label: miscellaneous

Saturday, June 07, 2008

Programmer Maturity and Estimation Skill

How do you measure a programmer's maturity? Recently, I have begun to think that it is reflected in how they estimate the projects and tasks that they need to do.

Programmers who have only worked on a small amount of code and few problems tend to highly overestimate or underestimate the complexity of a project/task. So, a mature programmer is not (just) someone who can code and estimate well and precisely on a well-known problem using a language that he knows well. A mature programmer would also:

- know quite clearly what he needs to do/learn/research on matters that he is not used to

- know how much time it will take to tackle it and how certain/uncertain that is, including the subtasks and trouble that could potentially come up

- make a clearly thought-out estimate, and most importantly

- not be emotionally attached to a problem, which means that not knowing something, or estimating it as long/hard/unknown/impossible, is nothing to be embarrassed/defensive about; instead, it's for the best if everyone is clear on what can and cannot be done

I think it's the same as maturity in life: you have less need for approval/praise, try less to satisfy/impress anybody, and focus more on doing what you can do best and keeping your integrity in check.

It does not mean we need to avoid the unknown and avoid adventure, but being mature means being clear about it and not promising something beyond what we can accomplish with integrity. Say something is risky when it is and let the stakeholders decide (and share the consequences later); say something is certain when you have a reference/experience for it and are ready to take the consequences if you are proven wrong (you learn something new anyway).

Posted by Hafiz on Saturday, June 07, 2008. 0 comments. Label: agile

Running .Net Winforms Application in Mono

I am curious to try running a .Net Winforms application in Mono for several reasons:

- Most portable GUI-based .Net apps are written not with Winforms but with something else, e.g. gtk.

- I want to see how far the Mono team has come in dealing with GUI applications (I have run server applications written in .net on mono, but no winforms application yet).

- I have written a pure and relatively simple/common winforms application that is supposed to run on any "good enough" winforms port. Before it, my use of winforms had to go into win32-specific "hacks", which are less likely to run on a non-win32 .Net port.

So I simply ran:

mono quicktask.exe

and the application "magically" ran. Screenshot below:

It's the quicktask app from my previous post, so you can compare it with the screenshot in that post. There are only some odd pixels in the textbox borders, but besides that, things run fine.

Now, as long as you don't do anything strange with winforms, it seems to be quite a portable development platform. Pretty convenient if you would like to keep developing in winforms, or already have a codebase in it and want to port it to a platform that can run mono.

One note though: if you need more, like a more integrated look and feel, you probably want to look at other alternatives, e.g. gtk sharp, since winforms in mono currently feels like the early java gui toolkits, i.e. it uses its own rendering instead of native widgets. It's good enough to make things run but probably won't impress much, considering how user interfaces are getting shinier these days.

Posted by Hafiz on Saturday, June 07, 2008. 0 comments. Label: coding

Friday, May 23, 2008

QuickTask for Snappy Task Addition on ICal-based calendar

I have been coding a little application to make adding tasks in iCal fast and comfortable. I finally have a binary release today. Check out QuickTask here.

I find entering tasks using the standard dialog provided by general calendaring applications quite uncomfortable. The dialog feels very "noisy" to me. I understand that most of them aim to let the user utilize the ical file standard as much as possible, but for my usual use it's too bloated.

I made quicktask to make entering tasks into the calendar fit my task-adding style better, and that of many others I think, especially GTD followers who don't bother with dates, times and prioritizing tasks (at least when entering them).

It assumes and simplifies several things:

- You assign each task to exactly one category

- You only need to enter the task name and description

I have been using it for some time now and hopefully it will be useful for others too.

Posted by Hafiz on Friday, May 23, 2008. 1 comment. Label: application

Friday, May 16, 2008

Do "svn info" the TortoiseSVN Way

It's not immediately obvious how you can do svn info from TortoiseSVN. I used to install a separate command-line svn client just to do this. However, I stumbled upon this discussion:

> how could i find infos like command "svn info" ...

Right-click, choose "properties" in the explorer context menu. Then, switch to the "SVN" tab.

Here's what I got while doing it :

I think it's pretty nice and easier compared to using a separate command-line client, for which you need to go to the console, go to that directory and type "svn info" (not to mention the hassle of installing the svn command-line client). It's not that apparent to people who are used to the command line, though, although it seems quite consistent with how explorer arranges things, i.e. the Properties dialog is where the info about the selected item is shown.

However, I think having some way to show this info in the main context menu of TortoiseSVN would feel straightforward and natural for some people. The info command is used just like update, commit and other typical commands, so they would normally expect to find it in the same place where they see those other common commands.

Posted by Hafiz on Friday, May 16, 2008. 0 comments. Label: application

Thursday, May 15, 2008

Use AnkhSVN to Avoid Forgotten File Addition in Visual Studio

If you use Subversion with Visual Studio, chances are you have been in a situation where you added a file in VS and forgot to "svn add" it, so it was never committed to subversion. Things will still be OK on your side; you will probably only become aware of this when someone else shouts "Hey, who broke the build?!".

This is where AnkhSVN comes in. I still use TortoiseSVN as my main svn client, but I also use AnkhSVN to avoid the case above. When I add stuff, it automatically does the "svn add". Another important use of it for me is the instant review of what has changed from within VS and the simple way to revert changes without having to go to windows explorer and access TortoiseSVN from there.

In short, I think it's a good complement to the main svn client, although not a replacement for it.

Posted by Hafiz on Thursday, May 15, 2008. 0 comments. Label: application

Wednesday, April 30, 2008

Setup Kubuntu 8.04 KDE 4 Remix on Toshiba Satellite 2450 Series

KDE 4 is finally officially released in Kubuntu 8.04, so it's time for me to reinstall stuff on the laptop (Toshiba Satellite 2450). Recently it has been running kubuntu 7.10 with the 3d card failing to configure, and I had no patience/time left to fix it. It was working in the previous kubuntu, so I have good hope that things will be better this time, plus I have been itching to test KDE 4 (I had been staying away from the previous betas). So, here's the recap.

The installation went really smoothly. There's nothing new with ubuntu/kubuntu here; it's been known to have a trouble-free installation process (although it's also been known to cause a little pain afterwards :) ). Restarting and logging in shows a welcoming KDE 4 experience, quite slick.

Now, the fun begins. The network is not working and there's no graphical setting anywhere that I can use to set the ip and stuff. This was quite well done in the KDE 3-based system settings, so it is quite a major drawback. A little googling "inspired" me to do things manually as below:

put this in /etc/network/interfaces

auto eth0

iface eth0 inet static

address ...

gateway ...

netmask ...

network ....0

broadcast ....255

and this in /etc/resolv.conf

nameserver ...

nameserver ...

and finally execute this manually (and put it in /etc/init.d/rc.local for auto-execution at boot)

route add default gw ...

Once the network works, the rest is the quite typical fix, upgrade, install, remove, etc.

The next surprising thing was that Firefox looked really, really ugly. I remember the same thing from the early days of kubuntu: gtk uses its default look and feel (which is very bad). Easy enough, there's gtk-engine-kde4 to the rescue. Installing it and setting it through SystemSetting->Appearance fixed it.

The next thing worth mentioning is the nvidia proprietary driver installation. I was a bit skeptical due to previous experience. But again, another round of googling "inspired" me (since I was not doing exactly what's in it, nor was the situation exactly the same).

In my case, it was actually much simpler (no need for Envy). There's a proprietary driver ready to be enabled (downloaded and configured) in the system. It's in DriverManager, accessible from the StartMenu. It just needs a click on the enable checkbox and it automatically downloads and configures the driver. However, to get it working, it still needs one line in the "Screen" section of /etc/X11/xorg.conf:

Option "UseDisplayDevice" "DFP"

After video card acceleration was working, the rest of the time was spent playing with desktop effects, which is quite interesting and fun.

Kubuntu 8.04 with KDE 4 sure is worth it, although I really think the network configuration matter is a very, very major flaw. It puts people who are installing it in a situation that is very difficult to troubleshoot. On a system where it's not a problem (preconfigured successfully on certain systems?) it could be fine, but not in many cases, I think. Besides, with no network configuration GUI available to play with, there's so little hint on what to do next.

Posted by Hafiz on Wednesday, April 30, 2008. 2 comments. Label: system

Vista Firewall Block All Port Accesses Out of the Blue

I was working as usual when I suddenly could not access the internet. Everybody else seemed to have no problem with it. All my LAN access seemed not to work either. I remember having this case some time ago and a reboot fixed it, but I had so much work open on the desktop that I refused to just give up this time.

Some diagnostics later, the result, strangely, was:

- ping works, both LAN and internet.

- Any access to any port anywhere fails. I cannot even ssh to a server in the LAN that I am usually able to access.

- Check the firewall config: nothing seems wrong.

- Turn the firewall off: it works!

- Turn it on again: it still works?!

Posted by Hafiz on Wednesday, April 30, 2008. 0 comments. Label: system

Monday, April 28, 2008

Menu and/vs Toolbar in .Net CF Development

Developing a Pocket PC application using .Net CF in Visual Studio makes it easy to get the job done, but it also sometimes gives some not-exactly-expected results. It feels like just another app development, since everything looks familiar from desktop app development, only with some stuff not available; besides that, things feel like home for a desktop developer. At least until you start playing with something like a toolbar.

At the beginning of development, you have this style of menu:

It fits devices with the common left and right action buttons.

However, after a while you will most probably think about adding a toolbar for common operations. That is when you find out that you no longer have access to the device's left-right action buttons, since it will then look like below:

The toolbar needs to "share" space with the menu, so the shortcut buttons no longer make sense. The same thing happens when you have three menus, like below:

But I think for the toolbar it's not really "fair", since many would expect something like below:

The above is actually achievable with a "hack" using a panel and picturebox. It gets the job done, but I wonder why it's not how the standard toolbar class behaves. It probably has something to do with screen space. However, a better option would be to let the developer choose, by providing an option for where the toolbar is located, so that those who want to keep the left-right buttons functional but still want a toolbar can get what they want.

I guess, for now, a .Net CF developer needs to hack together his own toolbar implementation if he would like to keep the standard menu. Otherwise, if it's OK for him to have the toolbar share space with the menu, or he even desires it to save space for something else, then using the standard toolbar class would be quite pleasant.

Posted by Hafiz on Monday, April 28, 2008. 1 comment. Label: coding

Saturday, April 26, 2008

Unit Test as a Documentation : The Case of PureMVC

I stumbled upon PureMVC while searching for a lightweight MVC framework for C#. The learning experience was not what I would call pleasant. The official documentation (which is still based on the ActionScript version) uses very long narratives with very specific terms throughout, assuming too much that the reader is already familiar with them. To make matters worse, there's no executable code to try anywhere. There's no one to blame, of course, since the site already says:

Beta - Unit tests are compiled and running in target platforms, as well as a Silverlight Demo. There isn't much documentation yet, however.

However, things changed once I took a peek at the existing unit tests. This is exactly the experience that unit-testing proponents refer to when they say "Unit Test as an Executable Documentation". They truly function as documentation for me, even better than pages and pages of narrative. It's like a "picture is worth a thousand words" experience.

By relying on the unit tests I could make things work in a very short time. It also made the pdfs that I had read earlier start to make sense (although I still think the narratives need much work to be humanly readable). The tests explain how I should use a certain class, how it interacts with other classes and, most importantly, how it produces the expected result. I didn't even need to compile and run them to know that they would work; it seemed quite clear (in fact, I didn't bother compiling the unit tests at all; a little copying and pasting showed in a short time that it works in my own code).

I am rarely on the other side of the unit testing world, i.e. reading tests to make sense of the code. I usually read my own or my co-workers' (whose codebase I share and am already familiar with). Seeing their usefulness from the purely-reader side (since it's truly "someone else's" code) gives me a deeper perspective on how they can make a difference in understanding and using completely new code.

Posted by Hafiz on Saturday, April 26, 2008. 0 comments. Label: agile

Call Event Handler Function Directly [.Net, WindowsForms]

When you code a Windows Forms application using Visual Studio (VS), your event handling functions are generated automatically. Things are handled visually.

But let's say you have made a menu and created its event handler function using VS, and you want to make a toolbar that does the same thing. Since you hate recoding everything, you'd like to call the menu's existing event handling function directly.

It's quite easy once you understand what the parameters generated in the event handling function are for. Well, assuming you don't have time to read a C# book from the beginning and you need to start coding C# right after coding C++ or Java with a tight deadline ahead, you just try to make sense of the code on the spot.

Generally, those functions look like below:

private void eventHandlingFunction(object sender, EventArgs e)

It turns out that the two parameters above are:

- object sender : the object from which this function is called.

- EventArgs e : stuff related to what's going on when this function is being called.

So, to call the function directly, you pass arguments that say:

- "I (the form that owns this function) call this myself" and

- "There's nothing specific going on (that you need to know), I just need you to do the routine"

which translates to:

eventHandlingFunction(this, EventArgs.Empty);

Of course, you need to make sure the internals of the function can handle being called with that kind of argument; otherwise the caller needs to provide the minimum necessary arguments, i.e. not just EventArgs.Empty, to get the function running.
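
Put together, the menu/toolbar case might look like the sketch below. To keep it runnable as a console program, MainForm here is a hypothetical plain class rather than a real System.Windows.Forms.Form, and the handler names, SaveCount and the two Simulate methods are made up for the illustration; only the (object sender, EventArgs e) signature and the direct call with this and EventArgs.Empty come from the text above.

```csharp
using System;

// Hypothetical stand-in for a form; a real one would derive from System.Windows.Forms.Form.
public class MainForm
{
    public int SaveCount;

    // Same shape as the handler Visual Studio generates for a menu item click.
    private void saveMenuItem_Click(object sender, EventArgs e)
    {
        // This handler ignores both arguments, so calling it directly is safe.
        SaveCount++;
    }

    // The toolbar handler reuses the menu handler instead of duplicating its body.
    private void saveToolbarButton_Click(object sender, EventArgs e)
    {
        saveMenuItem_Click(this, EventArgs.Empty);
    }

    // Helpers that stand in for the user clicking the menu item or the toolbar button.
    public void SimulateMenuClick() { saveMenuItem_Click(this, EventArgs.Empty); }
    public void SimulateToolbarClick() { saveToolbarButton_Click(this, EventArgs.Empty); }
}

public static class Program
{
    public static void Main()
    {
        MainForm form = new MainForm();
        form.SimulateMenuClick();
        form.SimulateToolbarClick();
        Console.WriteLine("save ran " + form.SaveCount + " times");
    }
}
```

Another option, when the toolbar event uses the same EventHandler signature, is to subscribe the menu handler to the toolbar event as well, so that no forwarding function is needed at all.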

Posted by Hafiz on Saturday, April 26, 2008. 0 comments. Label: coding

Friday, April 25, 2008

Incremental Adoption of Software : From Generic App to Specialized One

Most of our software needs can actually be fulfilled by many already existing general applications. Take the text editor and the spreadsheet, for example: they are very general but highly useful pieces of software. You can use them to store any kind of list or database, if you can accept their very generic feel and the awkwardness when things get bigger. You can even utilize folder structure, file naming conventions, markup conventions and built-in search and replace capabilities to "emulate" an app.

I think we usually underestimate how far we can go with already-existing software and are too quick to grab specialized software. But in this age of so many existing options, many of them even free, who can blame us? However, we also need to realize that adoption has its own hidden costs/restrictions/negatives, and that sometimes a more generic application performs better, e.g. the IDE vs. Emacs cases.

So, to take the best of both worlds, I guess the heuristic would be:

- Be really comfortable with the available generic applications. To be concrete, master applications like a powerful text editor (I use JEdit; there are others who use Emacs fanatically) and a spreadsheet. Use their advanced features. When you know them deeply, you'll find many cases where they simply suffice.

- Browse around and keep an eye on less widely used but powerful generic applications. For example, although it looks like it has a specialized use, I find Freemind really useful in a wide variety of situations.

- If the generic one seems enough in a certain case, try it first rather than trying to find a more specialized app; you might find that it actually does enough.

- When things get rough and you really feel that you need a more specialized app, shop around and pick the one with the most generic functionality. For example, when you only need to edit pictures and Paintbrush looks too plain, something like Paint.Net would be more suitable than beating yourself up trying to be a graphic designer using Gimp.

- When you finally use the new app, push it to its limits and see what else you can get out of it. Later, you can mix and match your software based on what each can do. You will have less software to deal with but still get the job done.

From the developer's perspective, it is useful to see which existing software is already usable enough to solve the problem you are trying to solve by developing new software. Developing more specialized (or more robust) software will cost the user the utility/familiarity that he/she has with the generic app, so you'd better give something much better in return.

Posted by Hafiz on Friday, April 25, 2008. 0 comments. Label: miscellaneous

Thursday, April 10, 2008

Use Prism for Sites or Web Application that is Used/Behave as an App

Not so long ago I stumbled upon Prism, a Mozilla project that aims to make using a site feel like using a desktop app. Of course, this means not every website makes sense to use in Prism, but for some it feels very natural.

Prism is (From the url above) :

Prism is an application that lets users split web applications out of their browser and run them directly on their desktop.

Altough technically speaking, currently it does :

- Gives you shortcut (in Start Menu, Desktop or Quicklaunch) so you can fire an url like you fire an app.

- Remove all the buttons and menu.

Many times when I open certain site in one tab and I intend it to stick there for a while, it will then accidentally closed, which is quite annoying since I would then find myself reopening tab several times (I am quite a fan of "Close other tabs" command, so I guess this is to be expected). Now, I could just open those kinds of sites in Prism and has more freedom to do more hectic research in Firefox.

It's still really early technology, but it's already useful in my case and quite assimilated . I am looking forward for updates on this one especially highly important stuff like the use of Firefox extension (or Prism's own extension) since something like Adblock is almost de-facto standard for web accessing these days.

Posted by Hafiz on Thursday, April 10, 2008. 0 comments. Label: application

Thursday, April 03, 2008

Visual Trick for Icon-less Node in TreeView [.Net, Windows Forms]

I have been spending my recent spare time coding more on Pocket Freemind. One of the additions/fixes is the handling of icon-less nodes in TreeView (the icon space is left blank by the framework).

As I wrote in my previous post, I used a square to fill it, although it did not look really representative. Later I removed it and left the space blank, at the cost of the node looking like it is floating, as below:

I later stumbled upon screenshots of a "competing" product and thought, "Hey, I think I know how that is done." It seems to use the standard TreeView widget, and there should be an image slot between the end of the line and the start of the text, so there must be an image that mimics the line, making the line virtually longer so that it reaches nearer to the text.

Some experimenting, taking small screenshots of the line and copy-pasting them with Paint.Net, proved it right (or at least it works the same way). The result is below:

Now the line looks longer and suits my taste better.

Other people can have different tastes, of course. But in my case, and in the context of mind mapping, I think it's better this way, since it can be quite confusing when I open a crowded mind map and there are blank spaces everywhere, so that it is not instantly apparent where each node is attached.

Posted by Hafiz on Thursday, April 03, 2008. 0 comments. Label: coding

Tuesday, March 18, 2008

Coding Precondition

I think certain conditions need to exist before a programmer starts coding. If they are not fulfilled, the programming task becomes harder, unfocused and distracted, and mostly produces a suboptimal result.

These differ for everybody and depend on many factors, like exposure to available technologies, experience and working environment. However, the common theme is that those things need to be in place before we can do the main task with flow and minimum distraction.

Here are examples of the things I consider preconditions:

- Roadmap. However vague it is, without one the coding feels like it is going nowhere, and we have little structure on which to base our technical choices.

- Issue Tracker. Beyond coding for practice, you will need some way of tracking what you want to achieve, where you currently are and what the next step is. It can range from a mere text file to something monstrous; take your pick.

- Revision Control. Coding without it feels like coding in the middle of a minefield. The feeling of safety, knowing that if we make mistakes we can always roll back, improves the way our brain solves the current problem, since it now feels freer to experiment and explore solutions.

- Integrated Environment. It could be several tools that you combine yourself and make work together, a full-blown IDE or any other combination. The key is to make them work seamlessly from coding to running, and to work naturally as an extension of your thoughts into concrete implementation. This also includes the libraries you depend on: they should be in place, tested and integrated first.

- References. Gather the relevant core references for what you are currently working on within short reach; it speeds things up compared to relying solely on just-in-time research.

Programming preconditions are worth prioritizing whenever any of them is even a little broken. Personally, I think it's worth stopping whatever I am doing to get those preconditions working and in top shape before moving on again with the main work.

Posted by Hafiz on Tuesday, March 18, 2008. 0 comments. Label: coding

Thursday, March 13, 2008

PascalCase and camelCase Convention (in C#)

Coming from the C++ world, I am a bit disturbed by how things are written/named in the C# world. The IDE, code samples and books all do something I have learned to avoid: starting function names with a capital letter.

I used to follow a convention that uses names beginning with a capital very sparingly, i.e. only for class names. Suddenly seeing capitals everywhere got me wondering: didn't they read the books by Stroustrup and other C++ gurus? Over time I learned to accept it, although I still often, by reflex, name properties and functions starting with lower case. But I can live with generated code and keep up with whatever convention already exists in the code I am working on.

I mostly assumed there was some kind of convention these guys were following, although I hadn't seen it written explicitly anywhere. Until today, when reading the book "C# in a Nutshell, 3rd Edition", I stumbled upon this:

By convention, arguments, local variables, and private fields should be in camel case (e.g., myVariable ), and all other identifiers should be in Pascal case (e.g., MyMethod ).

I still wonder where it came from initially, but at least I have now seen it written explicitly that, somehow, it's a convention. Thinking that all those people "incidentally" have the same urge to break the rules and name things with a capital letter is kind of creepy, so it's better like this :).

I don't mind following it, although my brain now has to hold two conventions, since I still keep the other one for C++. There's still a nagging feeling that you broke something sacred when you start the name of anything besides a class with a capital in C++.

PascalCase is OK, (lower) camelCase is OK too, as long as it gets the job done, I guess.

Posted by Hafiz on Thursday, March 13, 2008. 0 comments. Label: coding

Monday, March 10, 2008

Using Jude : Separate Model and View

Here's one way of using it that is quite useful to me: make separate model and diagram folders. I put all the UML elements, e.g. classes, under the model folder and create all diagrams under the diagram folder. This way I can reuse the same class definitions in several different diagrams. Any change to a class is reflected automatically in all of them.

It's a bit more work since you have to do things in several steps:

- Add any model under the model folder, e.g. right click on the folder -> Create Model -> Add Class

- Open the diagram and drag the class there from the structure view

It doesn't always seem practical to do so, though; for a quick "drawing" I just use the toolbar and leave everything, classes and diagrams, in the same folder. However, for relatively serious UML diagramming, separating models and diagrams is more convenient.

Posted by Hafiz on Monday, March 10, 2008. 0 comments. Label: agile

Thursday, March 06, 2008

Using (Manually-Generated) UML to Track Code's Conceptual Growth

One of the benefits of drawing UML ourselves (as opposed to relying on automatically generated diagrams) is tracking how the classes grow (their number and the associations among them). It's refreshing to occasionally pause a little from coding and update those dusty old diagrams that we last updated a week or a month ago. It helps us review and maintain the integrity of the code and, somehow, still feel in control of all of it.

There are things that do not show up in the source code or the IDE in an apparent manner, and without tracking at a high-level view, trouble related to the conceptual integrity of the code can creep in and accumulate over time. Even with lots of features that help to get a high-level view of classes, e.g. ctags-based tools or automatically generated diagrams, nothing can replace the role of a manually drawn diagram here.

Somehow it's not really about the diagram itself but about the act of clarifying what's inside our head in a more visible form for us to digest; the byproduct, the diagram, is just a nice bonus. It's not really the diagram that gets updated; it's our view of the whole thing that gets refreshed periodically. It gives a fresh view and the feeling that we are still in touch with the code. Also, in the process we see, stare at (and reflect on) associations, which can fuel new insights and breakthroughs or simply correct our outdated understanding.

Conceptual integrity can deteriorate over time, and taking an occasional visual view can help in dealing with it.

Posted by Hafiz on Thursday, March 06, 2008. 0 comments. Label: coding

Tuesday, March 04, 2008

Script/Executable-Based Application

There is a certain model of application that functions as a wrapper around an already-established script/executable. I have stumbled upon many applications written this way, and even wrote one myself some time ago (a GUI wrapper for an in-house CLI app). There are even applications that are written from the start by writing scripts first and then wrapping them in an application-style user interface, e.g. kalva.

It's not a sin, of course, to write this kind of application. I think it's a good way to go given these conditions:

- The executable/script is well established

- There's no library that wraps the functionality in a modular way

- You have little time on your hands

Ideally, it can be turned into a more modular and testable architecture, like below:

If you have the resources and the chance, your 2-tier application can evolve as above. You start by extracting the executable's logic into a library step by step, until all the necessary logic is in the library and the script is merely a caller of it. Once you have a library, you can link your application to it, which makes your app relatively cleaner and more cohesive.
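A rough sketch of that evolution, written in Python purely for illustration: the application layer talks to a backend with a fixed contract, so the subprocess call of the first stage can later be swapped for an in-process library function. All names here (`run_tool`, `ToolFrontEnd`, `executable_backend`, `library_backend`) are hypothetical, and the Python interpreter merely stands in for the wrapped executable.

```python
import subprocess
import sys


def run_tool(executable, args, timeout=30):
    """Run a command-line tool and return (exit_code, stdout, stderr)."""
    completed = subprocess.run(
        [executable, *args],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return completed.returncode, completed.stdout, completed.stderr


# Stand-in for the "well-established executable": a one-liner that echoes
# its arguments. A real wrapper would name the actual tool instead.
ECHO_SNIPPET = "import sys; print(' '.join(sys.argv[1:]))"


def executable_backend(args):
    """Stage 1: the application shells out to the existing executable."""
    return run_tool(sys.executable, ["-c", ECHO_SNIPPET, *args])


def library_backend(args):
    """Stage 2: same contract, but the logic now lives in-process."""
    return 0, " ".join(args) + "\n", ""


class ToolFrontEnd:
    """Application layer; it only knows the backend's contract."""

    def __init__(self, backend):
        # backend: callable taking a list of strings and returning
        # (exit_code, stdout, stderr)
        self._backend = backend

    def describe(self, args):
        code, out, err = self._backend(args)
        if code != 0:
            return "failed: " + err.strip()
        return "ok: " + out.strip()


if __name__ == "__main__":
    for backend in (executable_backend, library_backend):
        print(ToolFrontEnd(backend).describe(["hello", "world"]))
```

Because both backends honor the same contract, the front end does not change when the executable is replaced by a library, and the library version can be tested without spawning a process.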

Still, despite my preference for cleaner, more cohesive applications, the Script/Executable-Based Application is quite an interesting approach for rapidly developing something and/or reusing an established command-line interface.

Posted by Hafiz on Tuesday, March 04, 2008. 0 comments. Label: miscellaneous

Monday, March 03, 2008

Tools for Server-Related Development

Here are some (free) tools I use for server-related development on Windows. In combination they ease development considerably, almost as if everything were local.

- PuTTY. This one is the most basic. It's an SSH client that connects you to the shell, where you can do virtually everything on the server. However, for operations related to file/directory management, you'll need...

- WinSCP. It's an SFTP/FTP client with the look and feel of Midnight Commander, very handy for file/directory-related operations. It has a large number of features that I find really useful, e.g. shell command execution (you send the whole command instead of typing each character to the server) and directory synchronization (the sync feature has a large number of options with a convenient interface).

- JEdit with the FTP and SshConsole plugins. Despite its name, the FTP plugin can also connect using SFTP and lets you deal with files on the server as you do with local ones. SshConsole works together with the FTP plugin to let you send commands with the currently opened directory as the working directory. You don't know what you've missed until you try this one. It's very helpful if you edit lots of files on the server, e.g. when working with several configuration files. Within JEdit, almost every aspect of editing a file on the server feels like working with a local one: browsing/opening, speed (it's cached in a local buffer), saving, highlighting, history, etc.

- NetLimiter free version. The free one lets you monitor the traffic of your connection, e.g. how much bandwidth a certain application uses, overall bandwidth usage and details of the connections (IP, port) that a certain application opens.

- Wireshark. A very advanced tool for monitoring your network packets. It takes time to get used to, but it is really useful once you get the hang of it.

Posted by Hafiz on Monday, March 03, 2008. 0 comments. Label: system

Wednesday, February 27, 2008

No "clear" Command in Cygwin Default Installation

My Cygwin installation does not run "clear" when I type it; it says it does not know the command, or something like that. It is quite a basic operation to be unavailable. Oh well, that's just life, nothing that a little googling couldn't help.

A blog post cleared things up for me: I need to install the ncurses package. It is fixed now, but I still think it should be available by default.

Posted by Hafiz on Wednesday, February 27, 2008. 26 comments. Label: system

Monday, February 25, 2008

The Useful "tail -f"

"tail" is a program on UNIX-like systems that prints the last part of a file (its counterpart is easy to guess: "head" prints the beginning instead). At first I didn't find this program anything special, just something I thought I might use someday when I needed it.

I found a more useful way to use it quite a long time ago, when I tried to set up my TV tuner to work on Linux, which was quite painful to do at that time. One reference on the net (whose link I have lost, unfortunately) used "tail -f" to view changes in a log file in real time (it was used to watch the loading/unloading of the driver to see the effect of different parameters, pure "fun" ;) ). Thanks to it, I have had a very handy tool for watching log files (or any file of the same nature) ever since.

When you have trouble with servers or systems in general, you can troubleshoot better with this. However, "tail -f" can be really handy for programming too. When you want to trace a program but, for some reason, cannot show the trace on screen, just print it to a file and watch the lines being appended in real time; you avoid having to scan the whole log file after the fact.

It's available on most systems, e.g. through Cygwin on Windows. So you can rely on it most of the time.
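For the rare machine without "tail", the core idea is small enough to sketch. The Python below is only an illustration of the polling approach, not how tail itself is implemented: it remembers a byte offset and returns whatever complete lines were appended after it. The names `read_appended` and `follow` are made up for this sketch, and unlike "tail -f", which first prints the last few lines, `follow` starts at the current end of the file.

```python
import os
import time


def read_appended(path, offset=0):
    """Return (lines, new_offset) for complete lines appended after offset."""
    with open(path, "rb") as handle:
        handle.seek(0, os.SEEK_END)
        if handle.tell() < offset:
            # The file shrank (truncated or rotated): start over.
            offset = 0
        handle.seek(offset)
        data = handle.read()
    # Keep only whole lines; a partial last line is picked up next time.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end


def follow(path, poll_seconds=0.5, max_polls=None):
    """Yield lines as they are appended; loops forever unless max_polls is set."""
    offset = os.path.getsize(path)
    polls = 0
    while max_polls is None or polls < max_polls:
        lines, offset = read_appended(path, offset)
        for line in lines:
            yield line
        polls += 1
        time.sleep(poll_seconds)
```

A program being traced only has to append to a file; a loop such as `for line in follow("trace.log"): print(line)` in a second terminal then shows the trace as it grows (the file name is just an example).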

Posted by Hafiz on Monday, February 25, 2008. 0 comments. Label: application

Wednesday, February 20, 2008

Refactoring to Make Code More Human Readable, a Little Example

I coded a little tool in .Net today and needed to initialize something differently based on whether the second argument is given or not. The program is invoked something like this (to protect the innocent, I'll use very generic names here):

myProgram arg0

or

myProgram arg0 arg1

Doing the simplest thing first, the argument handling goes something like:

    if (args.Length > 1)
    {
        // initiate something the standard way
    }
    else
    {
        // initiate differently
    }

It works OK, but the conditional above leaves something to be desired, so I refactored it by extracting the argument-length check into a function:

    if (supportFileIsGiven(args))
    {
        // initiate something the standard way
    }
    else
    {
        // initiate things differently
    }

Suddenly the code reads more like English. It tells more of a story and delivers meaning. Given a choice, I'll choose to work on code in the style of the second one any time of day.
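The post shows only the call site, not the extracted helper. As a sketch of what such a helper might contain, here is the same idea in Python rather than C#; the names `support_file_is_given` and `describe_startup` are illustrative, and the assumption is simply that "given" means a second argument is present.

```python
import sys


def support_file_is_given(args):
    """True when a second argument (the support file) was passed."""
    return len(args) > 1


def describe_startup(args):
    """Say which start-up path the arguments select."""
    if support_file_is_given(args):
        return "start with support file " + args[1]
    return "start without a support file"


if __name__ == "__main__":
    # sys.argv[0] is the script name, so the program's own arguments
    # start at index 1.
    print(describe_startup(sys.argv[1:]))
```

The length check still exists, but it now sits behind a name that states the intent, which is the whole point of the refactoring.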

Posted by Hafiz on Wednesday, February 20, 2008. 1 comment. Label: coding

Tuesday, February 19, 2008

Java, C# and the Case of Inferior Look

Building an application is a lot of work, and dealing with the low-level issues of OS APIs makes it worse. Relatively high-level languages like Java and C# (I haven't yet seen widely used GUI applications based on languages like Ruby) can help productivity here.

However, despite their already long evolution, those languages are not yet fully capable of giving competitive results in terms of user interface. Java-based GUIs still look inferior compared to natively written applications. Even on Windows, where the integration seems best, they still feel odd here and there. That's quite unfortunate, since Java is a relatively good and easy language for building applications.

Then along came .Net (C#). This one is a little better, at least if what you are trying to do is develop for Windows. It has the ease of Java but a fairly native-feeling interface. For quick application programming with fancy interfaces, it provides most of what is needed to get the job done. It still has some holes here and there, but fortunately its interoperability with native code is quite excellent, with lots of third-party components to fill the gaps.

All is not lost on the Java side, though, since there's SWT to the rescue. I think people who are serious about developing applications in Java but still want to be competitive in the user interface department should put it in their toolbox. It still has some issues, but it takes a good approach, one that Java itself has started to adopt in recent releases, i.e. more native widget rendering.

There's a tradeoff in abstraction and generalization, as we see in the case of programming in VM-based languages. It creates gaps in the areas that fall outside the common ground the generalization covers. But recent efforts are getting closer to bridging those gaps.

There's another path one can take to avoid an "inferior" look while still being a little more productive than accessing the OS API directly. It's the bottom-up way: C++ wrappers. Instead of starting with ease/abstraction and moving down to take care of the details of nativeness, you start with nativeness and work towards ease/abstraction. There are lots of C++ wrappers out there that can help with this, e.g. Qt.

Take your pick then. It's a world full of options, and you don't always have to settle for the suboptimal look given by the standard library if you don't want to. That being said, I still enjoy JEdit, Freemind and JUDE even though their look feels a little out of place. So I guess substance is still king, although the look can really help too.

Posted by Hafiz on Tuesday, February 19, 2008. 0 comments. Label: miscellaneous

Monday, February 18, 2008

CScopeFinder, JEdit Plugin for Rapid Code Searching

I use JEdit as my "support" IDE. Sometimes I can't do everything in the main IDE, and sometimes the situation requires side-by-side comparison. These are the kinds of situations, among others, that I use JEdit for. This requires me to set up plugins that help with searching symbols and tracing relationships. One of them is CScopeFinder.

Setup goes through the very nice built-in Plugin Manager in JEdit; you just need to look for CScopeFinder and install it. The tricky part is the dependency on the cscope binary ("cscope -b -c -R" needs to be run first at the root of the source tree to be searched). On Windows I use the cscope binary from here. It confused me at first but, long story short, it turned out to have a bug in how it writes the root path into the resulting cscope.out: it adds a double quote after the path with no matching double quote before it. The first line looks something like this:

cscope 16 e:\the\path\here" ....

I just need to edit the file and delete the offending double quote, and it is usable afterwards.
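If the database gets rebuilt often, the manual edit can be scripted. The Python below is a minimal sketch that mirrors the hand edit described above and nothing more: it removes a double quote from the first line only when that line contains exactly one, i.e. a quote with no partner. The function names are made up, and keeping a backup copy of cscope.out before trying it seems prudent.

```python
def fix_header(line):
    """Drop a lone, unmatched double quote from a header line."""
    if line.count('"') == 1:
        return line.replace('"', "", 1)
    # Zero quotes, or a properly matched pair: leave the line alone.
    return line


def fix_cscope_out(path):
    """Fix the first line of the file in place; return True if it changed."""
    with open(path, "rb") as handle:
        header, newline, rest = handle.read().partition(b"\n")
    # latin-1 maps every byte to one character, so the round trip is lossless.
    fixed = fix_header(header.decode("latin-1")).encode("latin-1")
    if fixed == header:
        return False
    with open(path, "wb") as handle:
        handle.write(fixed + newline + rest)
    return True
```

Calling `fix_cscope_out("cscope.out")` right after the "cscope -b -c -R" run would then replace the editing step; everything after the first line is written back untouched.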

Usage is not as tricky as installation, though: just select the symbol you'd like to search for (double-clicking on the word will do), right click and choose "Find This C Symbol", and the result pops up with more contextual information than a generic search. There is also a "Cscope Stack" window that keeps the results of previous searches so you can refer back to them more conveniently.

I usually use it in combination with the built-in, and also very powerful, HyperSearch in JEdit.

I find this plugin quite handy when I work through unfamiliar code that I have to refer to, without opening another IDE instance just to get cross-searching capability or adding more burden to the already crowded running IDE. It's a bit outdated (the plugin as well as cscope-win32) but still useful nonetheless.

Posted by Hafiz on Monday, February 18, 2008. 1 comment. Label: application

Friday, February 15, 2008

Pocket Freemind, Freemind on PocketPC

PocketFreemind is the first open source project I've joined (and hopefully not the last :) ), and the only one so far. I took the plunge because I do a lot of critical stuff in Freemind and need it to be mobile. PocketFreemind at that time was quite usable, but it was missing some features that are quite essential to the personal semantic convention I use in the desktop version, e.g. red and green fonts and icons.

I took some time to catch up and started adding the needed features (thanks to Peter Carol, who started this project and had already written a codebase that is quite easy to work with). It was really fun and interesting (I got exposed to Windows Mobile development). After I got the features I badly needed, I took time off due to, as usual, work and life.

I am still looking forward to having more spare time to start coding on it again. There are still some features floating in my mind that I would really like to implement, and there's also Freemind 0.9.0 support, e.g. nodes with attributes, which will be really interesting to explore.

It still uses what .Net provides for TreeView and Node, which is why you still see something funny like a square block on nodes without icons (actually, without an icon the space would be blank, but I added a blank square icon for such nodes to help visually, since it looks really wacky without it). More exploration into custom drawing of nodes could, at least potentially, make things prettier.

So, if you need to sync your Freemind files with your PocketPC, give it a try.

Posted by Hafiz on Friday, February 15, 2008. 0 comments. Label: application

Tuesday, February 12, 2008

Budget Time with Time Block

How do we materialize our priorities in our daily life? In the case of money, people make budgets; in the case of time, I think making routine time blocks would do it.

When we spend without a budget and bookkeeping, we usually end up wondering where the hell the money went. Similarly, without routinely allocating exclusive time to something, I don't think we can feel sure we have "walked our talk". When I say coding should occupy 80 percent of a programmer's time, I need to set aside 80 percent of my working time for just that, with as little fragmentation as possible. For other responsibilities, I then need to allocate another block to do them in a batch, thus minimizing the overhead of context switching.

Here's an example of such time blocks:

- 9:00 - 12:00. Code: the most critical tasks

- 13:00 - 15:00. Code 2: more exploratory ones, or continuing stuff from block 1

- 15:00 - 17:00. Mail and anything else

This requires that our tasks be pooled in some system based on block/context, e.g. GTD, so that when we are in a certain block we pick tasks only from that pool. Anything that comes our way while we are in a certain block should be put into this system to be done later, when its block comes.

Of course, like a diet, this requires commitment if you want to see any difference. But unlike a diet, people won't get fat if they cross the line, at least not directly (you know: missed deadline -> stress -> eating a lot -> gaining weight). However, if you commit to it, other people will start to pick up your rhythm and adapt to it, which makes things easier over time.

Allocating exclusive time blocks should come naturally if you are sure about what you think is important. It won't always be easy, but the gain is worth it: steady progress in the areas that matter becomes something common rather than something that comes by only occasionally.

Posted by Hafiz on Tuesday, February 12, 2008. 0 comments. Label: effectivity

Monday, February 11, 2008

Vista ATI Display Driver Problem

Update (2008-06-08) : I updated to version 8.476.0.0 (28/03/2008) and the problem is no longer there. Case closed.

I have been using Windows Vista for several months now. Despite its several shortcomings (and bad reviews around the net), it is quite usable in my case. However, one thing still really annoys me: the ATI display driver problem. Occasionally the screen goes blank and the message below shows up:

I can usually get back to work fine after it happens. However, with recent drivers the problem is really bad: the driver reset happens several times in a row and ends in a blue screen of death (I hadn't seen one for quite a while before using Vista). I am currently stuck with driver version 8.390.0.0 from June 2007; otherwise the blue screen appears at least twice a day.

I have tried every driver update that has appeared, and so far the problem is not solved. It's not that major a problem, though; I can still work fine (an old driver that works is enough for now). It's just that I think I can now sympathize better with people having problems with this OS :).

Posted by Hafiz on Monday, February 11, 2008. 0 comments. Label: system

Wednesday, February 06, 2008

Application Level Programming in C++

C++ is a language that you can twist into almost any form of programming (at a cost, of course). You can program at a level only one step away from assembly language and, at the other extreme, you can build huge end-user applications with all their bells and whistles. Here I would like to elaborate on the latter.

Now that we have Java and C#, it's hard to think about application-level programming without bringing them into the discussion. Doing the same things as C# code does requires the C++ programmer to at least review the factors that make those languages widely adopted (even better, to try them deeply enough to really "get" them) and take some lessons.

That being said, I think the way to compete in application-level programming with C++, i.e. to use C++ for its performance and flexible lower-level APIs while avoiding getting stuck and wasting time on micro problems (pointers, anyone?), is to "emulate" a virtual machine, e.g. the JVM or .Net.

Clearly, what I mean by emulating is not writing another VM or coding a scripting language (most languages are written in C/C++ anyway). What I mean is preparing the environment, libraries, frameworks, tools, build system, etc. so that you only need to concern yourself with the matters that people using a language like Java are concerned with, while everything else is handled by your manually crafted "VM". Those things should be assimilated, glued together and set up rigorously, to the point that we can take it for granted that they work, and using them becomes semi-automatic in our daily coding.

With the components available now, you can already emulate most of what a virtual machine offers, albeit with a little more hard work on your part. If you think the gain is worth it, many things are already available to you.

For example, using naked new and delete can be considered suicide if your aim is to build an application (hint: boost::shared_ptr). Iterating over a collection using a functor or an old-style for loop (incrementing an index) makes it hard to match the readability of C# code (hint: boost::lambda). Manually editing a Makefile when you aim to build a big application can cripple your speed when something in the makefile gets messed up along the way or it becomes an unmaintainable monster (hint: CMake, Rake, Ant). There are already libraries and tools to help you match the kinds of things that VM-based languages offer.

Just because you have to use C++ does not mean you cannot take the conceptualization, abstraction and modularization used by higher-level languages and put them to good use in your own case and situation.

Posted by Hafiz on Wednesday, February 06, 2008. 0 comments. Label: coding

Tuesday, February 05, 2008

Track Coding Session with Freemind and JEdit

A coding session is an activity that can fill up your brain's resources pretty quickly. You need to hold a lot of things in your head at one time. I find this tiring and stressful without some tools to act as a process scheduler and/or swap "device", so that my RAM has lots of space for the micro task at hand.

I mainly use Freemind and JEdit for this (at the conceptual level: a mind mapping application and a text editor), with pencil and paper ready for emergencies. The use of Freemind is basically similar to what I wrote about tracking internet searches, only in this case it is used to track tasks, subtasks, subsubtasks and subsubsubtasks while coding. JEdit is handy as a "buffer" for code, notes and other things that are less hierarchical in nature.

Some tricks I have found helpful so far:

- Don't branch out too deep. Try to make a spawned task a sibling instead of a child of the current task, if possible.

- Try to finish one task within one hour, or two hours at most. Keep subtasks, if any, within 15-20 minutes. I use the Activity Timer gadget to help with this.

- Use shortcuts, colors and icons to keep the overhead of entering/typing small while still maintaining clarity.

- Attach the elapsed time to each task for evaluation. It's a good source for better estimates later and for better divide-and-conquer skill. Here's what my own convention looks like:

- Dump code snippets, notes and deleted code into JEdit; they could be useful later. I also find this better, more flexible and more dependable than relying solely on the IDE's undo/redo.

Posted by Hafiz on Tuesday, February 05, 2008. 0 comments. Label: coding

Monday, February 04, 2008

Combining Trac and XPlanner

I wrote some time ago about how using an issue/bug tracker (Trac) and a progress tracker (XPlanner) is really helpful, at least in my case. It would be really good if the two were truly integrated, but unfortunately that's not the case right now. We need to "integrate" them manually.

Here's the main conceptual merge I have made between them to support the concepts I am using:

A Ticket is a Story, a Story is a Ticket

From here on, it's like directing the flow of detail that is stuck in a Ticket in Trac: connect it and let it trickle down into more detail through the Story "hole" in XPlanner. Every Story is a Ticket being worked on.

We get the best of both worlds with this connection. On the Trac side we have the more strategic view of the Ticket/Story (Milestone, Roadmap, Wikis), and on the XPlanner side we have the progress/development detail (burndown chart, task drilldown, assignments, timesheet).

Some useful mechanisms for this:

- Make a wiki shortcut in XPlanner to Trac, so that in XPlanner you can type something like 'wiki:ticket/555' or 'wiki:changeset/555' and XPlanner's wiki system will link it to the corresponding Trac entry. We can do interesting things with this, like making notes in a Task entry that link to a certain Subversion revision.

- Use a 1-to-n mapping from Trac's Milestones to XPlanner's iterations. Pick the stories to be done in a certain iteration from its parent Milestone.

- Keep all the details concerning a Ticket (notes, references, updates, troubles) updated in Trac to avoid duplication or confusion. Position XPlanner more for scheduling, timesheets and log-like notes.

Posted by Hafiz on Monday, February 04, 2008. 2 comments. Label: agile